The Shock of the New: NAEP Moved Online, but Did All Students Move With It?

Amid mostly flat national scores and a few striking performances of unknown long-term relevance (San Diego’s fourth-graders, Florida in math, an oddly large eighth-grade math plunge in Philadelphia), the real headline of this year’s NAEP results is the wider gap between high- and low-performing students.

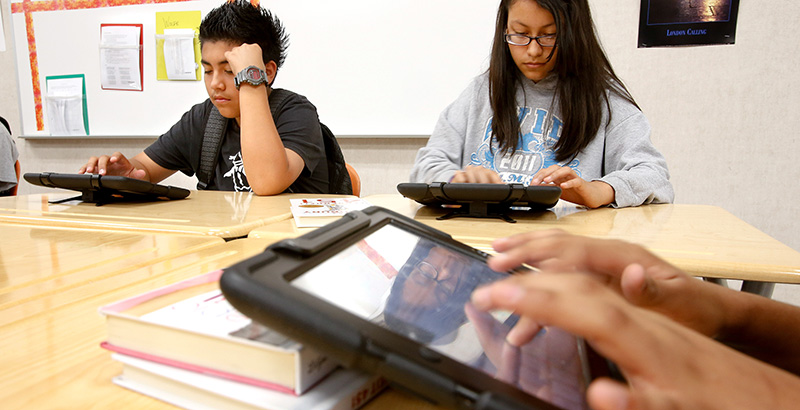

A few state education chiefs think they know part of the reason, at least in their states: The switch to an online test this year lowered the scores of local students, who had little or no experience taking digital tests.

In effect, they say, the test was less reliable.

When aligning results on the new Microsoft tablet–based tests to those taken on paper in 2015, the chiefs say, test officials made a nationwide adjustment that didn’t account for the effects of moving online in states with a larger number of poor students — who often lack access to computers — or that had previously used only paper tests.

“Our students, and those in a handful of other states that still give paper and pencil state tests, seemed to be at a disadvantage with the new online NAEP assessments,” said Kentucky Commissioner of Education Stephen Pruitt in a statement provided to The 74.

“It is an entirely different experience taking a test on a tablet than with the paper and pencil our kids are used to,” he said. “Going digital seemed to have an impact on results, especially in reading.”

Kentucky’s fourth-graders suffered a statistically significant four-point drop in reading scores, while its eighth-grade scores fell by three points, a decline that was not deemed statistically significant.

In Louisiana, where scores dropped by five points among fourth-graders in both reading and math, among the biggest declines in the country, state superintendent John White challenged the scoring methods.

“It is plausible that in making a uniform adjustment that’s the same for every student and every state, the federal government failed to acknowledge that the shift in technology has a different effect in different communities in different states,” he told The 74.

The political pressure on state education leaders to provide a rationale for scoring shortfalls is considerable, but an independent analysis of the scores by the Johns Hopkins Institute for Education Policy supported the contention that students in paper-testing states had lower scores.

Although it did not prove that “mode effects” — how testing methods affect students — caused scoring changes, the paper from Johns Hopkins found correlations: Scores declined in fourth-grade reading and math in 10 of 11 states that used paper-based state tests in 2016, including four of the five states with the largest declines in reading. By contrast, 19 states that already used online state tests — just under half — registered gains in reading, and 14 went up in math.

The researchers determined that “whether or not a state had used online testing” prior to NAEP predicted more of this year’s scoring changes than any other state characteristic. Because the National Center for Education Statistics (NCES), which administers NAEP, gave a paper test to a sample of students, the study was able to make what it called an “apples to apples” comparison using 2015 and 2017 paper results.

It found, for instance, that while Louisiana dropped 4.6 points on the 2017 computerized test in fourth-grade reading — a result that ranked near the bottom — its results on the 2017 paper test were a non–statistically significant 1.6-point drop.

(An important caveat: NAEP’s scoring methodology helped Louisiana relative to the rest of the country in fourth-grade math and eighth-grade reading, suggesting the effects may not be straightforward.)

The institute called on NCES to release more data, including each state’s paper results, to allow a fuller evaluation. “Even a one- or two-point change in either direction matters educationally and politically, even if not statistically, in part because NCES has historically emphasized these rankings and numbers,” the authors wrote.

Carissa Moffat Miller, the executive director of the Council of Chief State School Officers, said in a statement that the Johns Hopkins analysis “further confirms the need for additional information and continued research so every state fully understands their results and how this information should be used to drive decision-making in the future.”

NCES, which prepared for the move to digital testing for years and spent several extra months grading the new results, maintained that its scoring methodology was airtight.

In an April 9 letter to White, associate commissioner Peggy Carr said several studies led NCES to conclude that “the small number of mode effects observed were inconsistent within a state across grades and subjects and mostly not statistically significant. In fact, the amount of variation we observed was largely consistent with what might be expected from sampling variability.”

Carr wouldn’t comment on the Johns Hopkins study in advance of the public release of NAEP scores on Tuesday.

In a decision that has frustrated state leaders, Carr told The 74 last week she wouldn’t release state-level mode effects data publicly but would make them available to the chiefs. “The sample sizes were not designed to report at a level that a statistical agency would be comfortable enough with,” she said earlier. “But it’s enough to study.”

She said a full review would be available in a white paper that is expected to be released in a few months.

Given the effort to create a smooth transition to digital testing, it remains unclear why NCES didn’t use larger paper samples, which would have allowed statistically sound comparisons between paper and tablet users in each state and demographic breakdowns. Those measures would have provided a fuller understanding of how different testing methods may affect test takers differently.

“NAEP sample sizes for 2017 were designed to address the informational needs of the program during this transition year while managing the testing burden on states and districts and respecting the fiscal constraints on NAEP operating budgets,” said David Hoff, an NCES spokesman.
