As NAEP Turns to Electronic Tests, Officials Say Scores May Be Lower Because of Gaps in Basic Computer Skills, a Problem That May Have Affected 1 in 10 Testing Administrations

Correction appended April 4

When results from the nation’s most highly regarded test of academic progress are released next week in Washington, D.C., they won’t include evidence from some states indicating that students who took the exam on tablets performed worse than peers who took it on paper, not because of an academic deficiency but because they had less experience using a computer.

Significant score differences between students who took this year’s test on tablets and a small group who continued to take it on paper were found in about 1 out of every 10 administrations of this year’s test, according to sources who discussed the results with officials from the National Center for Education Statistics (NCES), which supervises the testing.

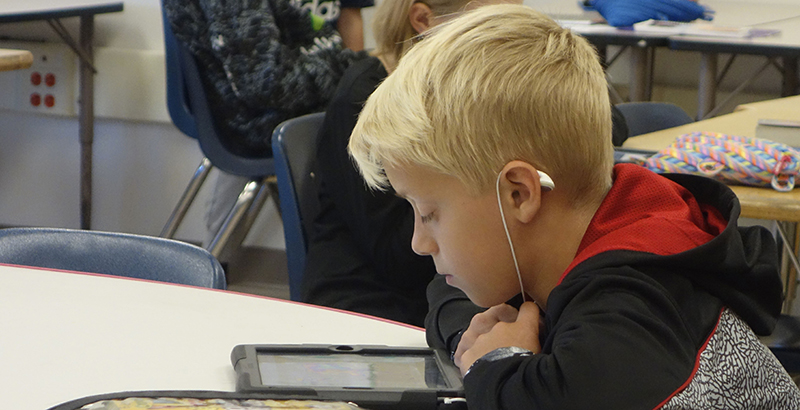

Every other year, the test, called the National Assessment of Educational Progress (NAEP), is given to fourth and eighth graders in reading and math in all 50 states; it was conducted electronically this year for the first time.

In their conversations with NCES officials, some state education leaders have expressed concern that high levels of poverty — often associated with less access to computers — and perhaps little to no history of online testing may have hurt students’ results. Some worried that NCES would not provide data detailing the effects of online testing, which would allow for apples-to-apples comparisons between states.

“We share many of the same questions that other states have voiced and recognize that transitioning to online with an exam like this one — when 50 states may have had 50 very different histories with online testing and differing student access to tablet technology — presents a real challenge for comparison,” Tennessee Commissioner of Education Candice McQueen said in a statement to The 74.

She added: “We look forward to hearing more from NCES.”

NCES, for its part, declined to comment on whether the mode of test-taking led to significant scoring differences in test administrations across the states.

Peggy Carr, the acting commissioner, said data comparing tablet and paper results would not be included in the April 10 release of NAEP test outcomes and trends — often referred to as “The Nation’s Report Card” — but would be shared with state superintendents who ask.

“I’m not going to report it in the Report Card, but I’m going to release that data to them if they want to know it,” she said.

She said too few students took the test on paper to yield reliable findings about the effects of taking it electronically. “It was a study,” she said. “It was not part of the reportable results. The sample sizes were not designed to report at a level that a statistical agency would be comfortable enough with. But it’s enough to study.”

Carr said NCES plans to release a white paper later in the year providing a full overview of the 2017 tests from a research perspective.

Unlike state standardized tests, NAEP tests representative samples of students in each state. Along with roughly 2,200 students in each state who took the test on Microsoft Surface Pro tablets, another 500 took it on paper.

Giving the test in both formats offered NCES policy and statistical advantages. By mathematically merging the tablet results with the paper ones on the single scoring scale used for previous tests, the agency was able to update what many call “the trend”: its record of student progress dating to 1971, which researchers and educators use almost universally when discussing issues such as achievement gaps.

As in the past, NCES plans to report each state’s re-scaled scores. But Louisiana State Superintendent of Education John White suggested in a March letter to Carr that, absent the inclusion of paper-based scores, NCES risks applying an identical standard of “technology access or skill” to all students.

“I understand you want to maintain the national trend line — that’s smart,” White told The 74. “But is there a difference at the state level because we have more low-income kids or less experience with computers? The answer is, of course.”

A large body of research, dating back to benchmark studies of the “digital divide” decades ago, has established that poor students are less likely to have access to computers both at home and at school. In recent years, states in the PARCC testing coalition experienced scoring declines on computerized tests.

But testing experts believe there are good reasons to make the switch. “The most obvious advantage is a closer match between the mode of assessment and the mode in which people do work,” says Randy Bennett, who holds the Norman O. Frederiksen Chair in Assessment Innovation at the Educational Testing Service, and has written extensively on technology and testing. “If reading, writing, and problem solving are being done routinely with digital devices in academic and work settings, then testing in the traditional paper mode is not going to give policy-makers an accurate (or valid) picture of what students know and do.”

Correction: Sources told The 74 that 1 out of 10 administrations of the NAEP test could reflect lower scores due to differences in students’ computer skills. An earlier headline to this story incorrectly stated that 1 out of 10 students’ scores might have been affected.
