Polikoff: It’s Easy to Blame Common Core for Lagging NAEP Scores. But the Evidence Doesn’t Really Add Up


This essay originally appeared on the Eduwonk blog.

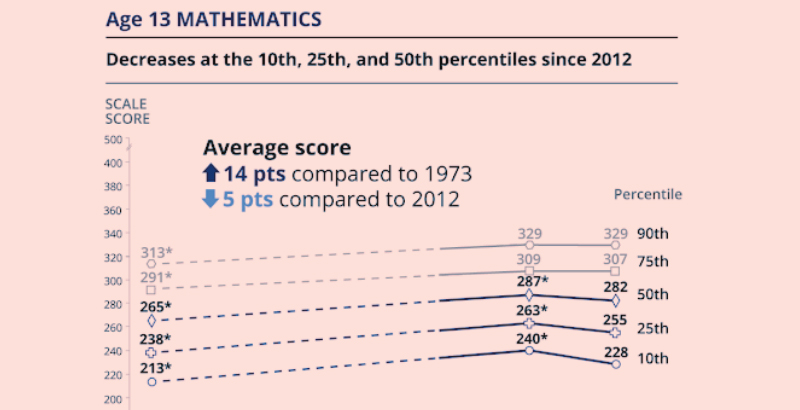

NAEP long-term trend scores dipped pretty substantially, and the alarm bells are going off. To be sure, the alarm bells should have been going off for quite a while — after big bumps in the early aughts (at least in mathematics), NAEP scores have been pretty much stagnant since around 2009 or so. And there have been troubling signs over at least the last decade that a) various longstanding achievement gaps have not been closing, and b) gaps between high- and low-performing students have been widening.

Worst of all, this evidence pre-dates the pandemic, which we have good reason to suspect will make things worse. The pandemic's effects have clearly been felt disproportionately by those who were already underserved by our educational and social systems: students of color, those from low-income families, students with disabilities and English learners.

The questions on everyone’s mind are what caused this dip, and what we can do about it. As someone who’s studied the standards movement for the last 15 years, I was asked to give my thoughts about the possible impact of the Common Core and related standards on these NAEP outcomes.

The short answer to the first question is I don’t think Common Core per se could have had much if anything to do with this long-term trends NAEP dip. The most obvious reason is that the timeline doesn’t make sense. Common Core started to be adopted by states in 2011 and new assessments rolled out by 2015. Whether the standards are even being implemented in classrooms today is anybody’s guess, but there is a good deal of evidence that implementation is spotty, at best. In contrast, this long-term trends dip seems to have accelerated in the last two years. Could you squint and say, well, these are lagging results? Maybe, but that feels like a leap.

To be sure, the best evidence on the impact of Common Core and other college/career ready standards on student learning is far from overwhelming (and to be double sure, answering this question convincingly is incredibly fraught, if even possible). One short-term study found Common Core produced small positive bumps in achievement, but a longer-term effort focused more broadly on college- and career-ready standards found negative impacts that were getting worse over time. Could the new long-term trend results align with the latter study? Possibly, but I’d want to reserve judgment until we get another round of state NAEP data to see whether it supports that conclusion.

What standards/curriculum-related issues do I think could be at play here? One thing that’s worth paying attention to is the alignment of the NAEP long-term trends to current standards. The assessment has a framework that is intentionally not updated to align with changes in curriculum over time. But what if content that used to be taught when kids were 7 or 8 is now taught when they are 9 or 10? There is some suggestive evidence that these kinds of timing issues may at least partially explain main NAEP mathematics results, and one would imagine that these effects would be even larger for long-term trend NAEP.

Another plausible argument I’ve heard recently is about the widening gaps between high- and low-achievers. The argument goes that Common Core has often been interpreted as deemphasizing procedural knowledge in favor of more conceptual understanding (certainly I have heard this interpretation in my conversations with teachers), whether or not that’s a correct interpretation of the standards. But for students who might struggle in mathematics, not being exposed to this foundational procedural knowledge in early grades could have deleterious effects on their later mathematics achievement.

We need more evidence about student achievement, especially in light of the pandemic, and we need that evidence on a range of different kinds of assessments. We will probably never have a convincing answer to what caused this dip, but regardless of the reason, we need to focus on proven solutions — high-quality curriculum aligned with the science of reading, careful professional support for teachers, strategies to improve teacher working conditions and to support collaboration and data use, and direct student supports like one-on-one tutoring.

Morgan Polikoff is an associate professor of education at the USC Rossier School of Education. He studies standards, assessment, curriculum and accountability policy.

