Senate Inquiry Warns About Harms of Digital School Surveillance Tools, Calls on FCC to Clarify Student Monitoring Rules


Updated, April 5

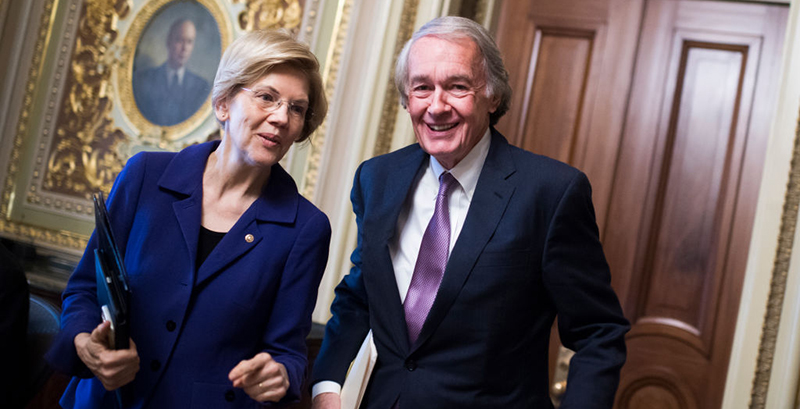

Democratic Sens. Elizabeth Warren and Ed Markey are calling on the Federal Communications Commission to clarify how schools should monitor students’ online activities, arguing in a new report that educators’ widespread use of digital surveillance tools could trample students’ civil rights.

They also want the U.S. Education Department to start collecting data on the tools that could highlight whether they have disproportionate — and potentially harmful — effects on certain student groups.

In October, the senators asked four education technology companies that keep tabs on the online activity of millions of students across the country — often 24 hours a day, seven days a week — to provide information on how they use artificial intelligence to glean their information.

Based on their responses, the senators said:

- The companies’ software may be misused to identify students who are violating school disciplinary rules. They cited a recent survey where 43% of teachers reported their schools employ the monitoring systems for this purpose, potentially increasing contact between police and students and worsening the school-to-prison pipeline.

- The companies have not attempted to determine whether their products disproportionately target students of color, who already face harsher and more frequent school discipline, or other vulnerable groups, like LGBTQ youth.

- Schools, parents and communities are not being appropriately informed of the use — and potential misuse — of the data. Three of the four companies indicated they do not directly alert students and guardians of their surveillance.

Warren and Markey concluded there is a dire “need for federal action to protect students’ civil rights, safety and privacy.”

“While the intent of these products, many of which monitor students’ online activity around the clock, may be to protect student safety, they raise significant privacy and equity concerns,” the lawmakers wrote. “Studies have highlighted unintended but harmful consequences of student activity monitoring software that fall disproportionately on vulnerable populations.”

An FCC spokesperson said they’re reviewing the 14-page report and an Education Department spokesperson said they “look forward to corresponding with the senators” about its findings.

Lawmakers’ inquiry into the business practices of school security companies Gaggle, GoGuardian, Securly and Bark Technologies is the first congressional investigation into student surveillance tools, whose use grew dramatically during the pandemic when learning shifted online.

It follows on the heels of investigative reporting by The 74 into Gaggle, which uses artificial intelligence and a team of human content moderators to track the online behaviors of more than 5 million students. The 74 used public records to expose how Gaggle’s algorithm and its hourly-wage workers sift through billions of student communications each year in search of references to violence and self-harm, subjecting youth to constant digital surveillance with steep implications for their privacy. Gaggle, whose tools track students on their school-issued Google and Microsoft accounts, reported a 20 percent increase in business during the pandemic.

Bark didn’t respond to requests for comment. Securly spokesman Josh Mukai said in a statement that the company is reviewing the senators’ March 30 report and looks forward “to continuing our dialogue with Senators Warren and Markey on the important topics they have raised.”

“Parents expect that schools will keep children safe while in the classroom, on a field trip or while riding on a bus,” GoGuardian spokesman Jeff Gordon said in a statement. “Schools also have a responsibility to keep students safe in digital spaces and on school-issued devices.”

Gaggle Founder and CEO Jeff Patterson submitted a statement after this article was published. He said the company is reviewing the lawmakers’ recommendations “to assess how we can further strengthen our work to better protect students.”

“We want to ensure our technology is effectively supporting student safety without creating unintended risks or harms,” Patterson continued. “We have taken steps over the years to ensure effective privacy protections and mitigate bias in our platform, but welcome continued dialogue that will help make sure tools like Gaggle can continue to be used to support students and educators.”

Bark Technologies CEO Brian Bason wrote in a letter to lawmakers that AI-driven technology could be used to solve the country’s “terrible history of bias in school discipline” by removing the decisions of individual teachers and administrators.

“While any system, including AI-based solutions, inherently have some bias, if implemented correctly AI-based solutions can substantially reduce the bias that students face,” Bason wrote.

As to the question of whether their surveillance exacerbates the school-to-prison pipeline, the companies’ letters acknowledge in certain cases they contact police to conduct welfare checks on students. Securly noted in its letter that in some instances, education leaders “prefer that we contact public safety agencies directly in lieu of a district contact.”

Under the Clinton-era Children’s Internet Protection Act, passed in 2000, public schools and libraries are required to filter and monitor students’ internet use to ensure they don’t access material “harmful to minors,” such as pornography. Districts have cited the law to justify the adoption of AI-driven surveillance tools that have proliferated in recent years. Student privacy advocates argue the tools go far beyond the federal mandate and have called on the FCC to clarify the law’s scope. Meanwhile, advocates have questioned whether schools’ use of digital surveillance tools to monitor students at home violates Fourth Amendment protections against unreasonable searches and seizures.

In a recent survey by the nonprofit Center for Democracy and Technology, 81 percent of teachers said they used software to track students’ computer activity, including to block obscene material or monitor their screens in real time. A majority of parents said they worried about student data getting shared with the police and more than half of students said they decline to share their “true thoughts or ideas because I know what I do online is being monitored.”

Elizabeth Laird, the group’s director of equity in civic technology, said it has been calling on student surveillance companies to be more transparent about their business practices but it’s “disappointing that it took a letter from Congress to get this information.” She said she hopes the FCC and Education Department adopt lawmakers’ recommendations.

“None of these companies have researched whether their products are biased against certain groups of students,” she said in an email while questioning their justification for holding off on such an inquiry. “They cite privacy as the reason for not doing so while simultaneously monitoring students’ messages, documents and sites visited 24 hours a day, seven days a week.”

The 74’s investigation, which used data on Gaggle’s foothold in Minneapolis Public Schools, failed to identify whether the tool’s algorithm disproportionately targeted Black students, who are more often subjected to student discipline than their white classmates. However, it highlighted instances in which keywords like “gay” and “lesbian” were flagged, potentially subjecting LGBTQ youth to heightened surveillance for discussing their sexual orientation.

Amelia Vance, an attorney and student privacy expert, said she was intrigued that the companies pushed back on the idea that their tools are used to discipline students, since the federal monitoring requirement was meant to keep kids from consuming inappropriate content online and students likely face consequences for viewing violent or sexually explicit materials. She agreed the companies should research their algorithms for potential biases and would benefit from additional transparency.

However, Vance said in an email that FCC clarification “would do little at best and may provide counterproductive guidance at worst.” Many schools, she said, are likely to use the tools regardless of the federal rules.

“Schools aren’t required to monitor social media, and many have chosen to do so anyway,” said Vance, the co-founder and president of Public Interest Privacy Consulting. Some school safety advocates are actively lobbying lawmakers to expand student monitoring requirements, she said.

Asking the FCC to issue guidance “could actually be counterproductive to the goal of limiting monitoring and ensuring more privacy protections for students since it is possible that the FCC could require a higher level of monitoring.”

Read the letters from Gaggle, GoGuardian, Securly and Bark Technologies:

